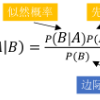

With increasing intelligence and integration, large-scale industrial processes often contain a great number of two-valued variables (generally stored as 0 or 1). However, these variables cannot be handled effectively by traditional monitoring methods such as LDA, PCA and PLS. Recently, a mixed hidden naive Bayesian model (MHNBM) was developed, for the first time, to utilize both two-valued and continuous variables for abnormality monitoring. Although MHNBM is effective, it still has shortcomings. In MHNBM, the variables that are more strongly correlated with other variables receive greater weights, which does not guarantee that greater weights are assigned to the more discriminating variables. In addition, the conditional probability must be computed from historical data. When training data are scarce, the conditional probability between continuous variables tends to be uniformly distributed, which degrades the performance of MHNBM. Here a novel feature weighted mixed naive Bayes model (FWMNBM) is developed to overcome these shortcomings. In FWMNBM, the variables that are more correlated with the class receive greater weights, so that the more discriminating variables contribute more to the model. At the same time, FWMNBM does not have to calculate the conditional probability between variables, so it is less restricted by the number of training samples. Compared with MHNBM, FWMNBM performs better, and its effectiveness is validated through the numerical cases of a simulation example and a practical case from the Zhoushan thermal power plant (ZTPP), China.
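The general idea of a feature-weighted naive Bayes classifier over mixed variables can be sketched as follows. This is a minimal illustration, not the FWMNBM of the abstract: it assumes Bernoulli likelihoods for the two-valued variables, Gaussian likelihoods for the continuous ones, and per-feature weights supplied by the caller (the abstract does not say how the weights are computed). The names `fit`, `predict`, `w_bin` and `w_cont` are hypothetical.

```python
import math

def fit(X_bin, X_cont, y):
    """Estimate, per class, the prior, Bernoulli and Gaussian parameters."""
    model = {}
    n = len(y)
    for c in sorted(set(y)):
        idx = [i for i, label in enumerate(y) if label == c]
        n_c = len(idx)
        # Laplace-smoothed P(x_j = 1 | c) for each two-valued variable.
        p_bin = [(sum(X_bin[i][j] for i in idx) + 1) / (n_c + 2)
                 for j in range(len(X_bin[0]))]
        # Mean and (slightly regularised) variance for each continuous variable.
        gauss = []
        for j in range(len(X_cont[0])):
            vals = [X_cont[i][j] for i in idx]
            mu = sum(vals) / n_c
            var = sum((v - mu) ** 2 for v in vals) / n_c + 1e-6
            gauss.append((mu, var))
        model[c] = (n_c / n, p_bin, gauss)
    return model

def predict(model, w_bin, w_cont, x_bin, x_cont):
    """Return the class maximising log P(c) + sum_j w_j * log P(x_j | c).

    The weights are an assumption of this sketch: a larger w_j lets
    feature j contribute more to the decision, and w_j = 0 ignores it.
    """
    best, best_score = None, float("-inf")
    for c, (prior, p_bin, gauss) in model.items():
        score = math.log(prior)
        for w, p, x in zip(w_bin, p_bin, x_bin):
            score += w * math.log(p if x == 1 else 1 - p)
        for w, (mu, var), x in zip(w_cont, gauss, x_cont):
            log_pdf = -0.5 * math.log(2 * math.pi * var) - (x - mu) ** 2 / (2 * var)
            score += w * log_pdf
        if score > best_score:
            best, best_score = c, score
    return best
```

Because each feature only enters through its own class-conditional likelihood, no conditional probability between variables has to be estimated, which is the property the abstract highlights for small training sets.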

Related content

Runtime verification or runtime monitoring equips safety-critical cyber-physical systems to augment design assurance measures and ensure operational safety and security. Cyber-physical systems have interaction failures, attack surfaces, and attack vectors resulting in unanticipated hazards and loss scenarios. These interaction failures pose challenges to runtime verification regarding monitoring specifications and monitoring placements for in-time detection of hazards. We develop a well-formed workflow model that connects system theoretic process analysis, commonly referred to as STPA, hazard causation information to lower-level runtime monitoring to detect hazards at the operational phase. Specifically, our model follows the DepDevOps paradigm to provide evidence and insights to runtime monitoring on what to monitor, where to monitor, and the monitoring context. We demonstrate and evaluate the value of multilevel monitors by injecting hazards on an autonomous emergency braking system model.

Applications of Reinforcement Learning (RL), in which agents learn to make a sequence of decisions despite lacking complete information about the latent states of the controlled system, that is, they act under partial observability of the states, are ubiquitous. Partially observable RL can be notoriously difficult -- well-known information-theoretic results show that learning partially observable Markov decision processes (POMDPs) requires an exponential number of samples in the worst case. Yet, this does not rule out the existence of large subclasses of POMDPs over which learning is tractable. In this paper we identify such a subclass, which we call weakly revealing POMDPs. This family rules out the pathological instances of POMDPs where observations are uninformative to a degree that makes learning hard. We prove that for weakly revealing POMDPs, a simple algorithm combining optimism and Maximum Likelihood Estimation (MLE) is sufficient to guarantee polynomial sample complexity. To the best of our knowledge, this is the first provably sample-efficient result for learning from interactions in overcomplete POMDPs, where the number of latent states can be larger than the number of observations.

Deep neural networks have become an integral part of our software infrastructure and are being deployed in many widely-used and safety-critical applications. However, their integration into many systems also brings with it the vulnerability to test time attacks in the form of Universal Adversarial Perturbations (UAPs). UAPs are a class of perturbations that, when applied to any input, cause model misclassification. Although there is an ongoing effort to defend models against these adversarial attacks, it is often difficult to reconcile the trade-offs in model accuracy and robustness to adversarial attacks. Jacobian regularization has been shown to improve the robustness of models against UAPs, whilst model ensembles have been widely adopted to improve both predictive performance and model robustness. In this work, we propose a novel approach, Jacobian Ensembles, a combination of Jacobian regularization and model ensembles, to significantly increase the robustness against UAPs whilst maintaining or improving model accuracy. Our results show that Jacobian Ensembles achieves previously unseen levels of accuracy and robustness, greatly improving over previous methods that tend to skew towards only either accuracy or robustness.

This paper establishes the asymptotic independence between the quadratic form and maximum of a sequence of independent random variables. Based on this theoretical result, we find the asymptotic joint distribution for the quadratic form and maximum, which can be applied to high-dimensional testing problems. By combining the sum-type test and the max-type test, we propose Fisher's combination tests for the one-sample mean test and two-sample mean test. Under this novel general framework, several strong assumptions in the existing literature are relaxed. Monte Carlo simulations show that our proposed tests are strongly robust to both sparse and dense data.
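Fisher's combination of two p-values can be sketched as below, assuming the sum-type and max-type tests have already produced p-values `p_sum` and `p_max` (hypothetical names) and that these are independent under the null, which is what the asymptotic independence result is meant to justify. With two p-values the statistic $-2(\log p_1 + \log p_2)$ is compared against a chi-square distribution with 4 degrees of freedom, whose survival function has the closed form $e^{-t/2}(1 + t/2)$.

```python
import math

def fisher_combine(p_sum, p_max):
    """Combine two independent p-values with Fisher's method.

    Under the null, t = -2 * (log p_sum + log p_max) follows a chi-square
    distribution with 4 degrees of freedom; its survival function is
    exp(-t / 2) * (1 + t / 2).
    """
    t = -2.0 * (math.log(p_sum) + math.log(p_max))
    return math.exp(-t / 2.0) * (1.0 + t / 2.0)

# Example: two individually borderline p-values.
combined = fisher_combine(0.05, 0.05)
```

The combined p-value is small whenever either component p-value is very small, which is why a combination of this kind can respond to both sparse and dense signals.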

Existing inferential methods for small area data involve a trade-off between maintaining area-level frequentist coverage rates and improving inferential precision via the incorporation of indirect information. In this article, we propose a method to obtain an area-level prediction region for a future observation which mitigates this trade-off. The proposed method takes a conformal prediction approach in which the conformity measure is the posterior predictive density of a working model that incorporates indirect information. The resulting prediction region has guaranteed frequentist coverage regardless of the working model, and, if the working model assumptions are accurate, the region has minimum expected volume compared to other regions with the same coverage rate. When constructed under a normal working model, we prove such a prediction region is an interval and construct an efficient algorithm to obtain the exact interval. We illustrate the performance of our method through simulation studies and an application to EPA radon survey data.
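A grid-based version of full conformal prediction with a model-based conformity measure can be sketched as follows. This is an illustration under stated assumptions, not the exact interval algorithm of the abstract: the working model is a normal with fixed variance whose mean is shrunk toward a value `mu0` standing in for indirect information, so the predictive density is monotone in the negative distance to that mean. The names `conformal_region`, `mu0` and `prior_weight` are hypothetical.

```python
def conformal_region(ys, grid, alpha=0.1, mu0=0.0, prior_weight=1.0):
    """Return the grid points kept in a full conformal prediction region.

    For each candidate value, the data are augmented with the candidate,
    the working-model mean is refit on the augmented sample, and the
    candidate is kept if its conformity rank is not in the lowest alpha
    fraction.
    """
    region = []
    for cand in grid:
        aug = list(ys) + [cand]
        # Shrunken mean of the augmented sample (symmetric in all points).
        m = (sum(aug) + prior_weight * mu0) / (len(aug) + prior_weight)
        # Higher score = more conforming under the normal working model.
        scores = [-abs(v - m) for v in aug]
        rank = sum(1 for s in scores if s <= scores[-1])
        if rank / len(aug) > alpha:
            region.append(cand)
    return region

ys = [1.0, 1.2, 0.8, 1.1, 0.9, 1.05, 0.95, 1.15, 0.85, 1.0]
grid = [i / 10 for i in range(-50, 51)]
region = conformal_region(ys, grid, alpha=0.2, mu0=1.0)
```

The coverage guarantee comes from the rank computation alone, so a poor choice of `mu0` makes the region wider but does not invalidate it.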

Stochastic gradient Langevin dynamics is one of the most fundamental algorithms for solving the sampling and non-convex optimization problems that arise in machine learning applications. In particular, its variance-reduced versions have recently gained attention. In this paper, we study two variants of this kind, namely, the Stochastic Variance Reduced Gradient Langevin Dynamics and the Stochastic Recursive Gradient Langevin Dynamics. We prove their convergence to the objective distribution in terms of KL-divergence under the sole assumptions of smoothness and a Log-Sobolev inequality, which are weaker conditions than those used in prior works for these algorithms. With the batch size and the inner loop length set to $\sqrt{n}$, the gradient complexity to achieve an $\epsilon$-precision is $\tilde{O}((n+dn^{1/2}\epsilon^{-1})\gamma^2 L^2\alpha^{-2})$, which improves on previous analyses. We also show some essential applications of our result to non-convex optimization.
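The structure of a variance-reduced Langevin sampler can be sketched on a toy problem. The code below is a minimal one-dimensional illustration of an SVRG-style Langevin update, with the batch size and inner loop length both set to $\sqrt{n}$ as in the abstract. The target, step size, and function name `svrg_ld` are assumptions made for this example: the potential is $F(x) = \sum_i (x - a_i)^2 / 2$, so the target distribution is Gaussian with mean equal to the average of the $a_i$.

```python
import math
import random

def svrg_ld(a, step=0.01, epochs=500, seed=0):
    """Sample from exp(-F) with F(x) = sum_i (x - a_i)^2 / 2 (toy target)."""
    rng = random.Random(seed)
    n = len(a)
    batch = inner = max(1, int(math.sqrt(n)))

    def grad_i(x, i):
        # Gradient of the i-th component f_i(x) = (x - a_i)^2 / 2.
        return x - a[i]

    x, samples = 0.0, []
    for _ in range(epochs):
        # Outer loop: take a snapshot and compute the full gradient there.
        snapshot = x
        full_grad = sum(grad_i(snapshot, i) for i in range(n))
        for _ in range(inner):
            idx = [rng.randrange(n) for _ in range(batch)]
            # Variance-reduced estimate of the full gradient at x.
            correction = (n / batch) * sum(
                grad_i(x, i) - grad_i(snapshot, i) for i in idx)
            v = full_grad + correction
            # Langevin step: gradient descent plus Gaussian noise.
            x = x - step * v + math.sqrt(2.0 * step) * rng.gauss(0.0, 1.0)
            samples.append(x)
    return samples

a = [2.0 + 0.1 * i for i in range(16)]
samples = svrg_ld(a)
```

After a burn-in period the sample average should sit close to the mean of `a`; the step size here is chosen by hand for this toy target and is not the schedule analysed in the paper.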

In this paper we study the finite sample and asymptotic properties of various weighting estimators of the local average treatment effect (LATE), several of which are based on Abadie (2003)'s kappa theorem. Our framework presumes a binary endogenous explanatory variable ("treatment") and a binary instrumental variable, which may only be valid after conditioning on additional covariates. We argue that one of the Abadie estimators, which we show is weight normalized, is likely to dominate the others in many contexts. A notable exception is in settings with one-sided noncompliance, where certain unnormalized estimators have the advantage of being based on a denominator that is bounded away from zero. We use a simulation study and three empirical applications to illustrate our findings. In applications to causal effects of college education using the college proximity instrument (Card, 1995) and causal effects of childbearing using the sibling sex composition instrument (Angrist and Evans, 1998), the unnormalized estimates are clearly unreasonable, with "incorrect" signs, magnitudes, or both. Overall, our results suggest that (i) the relative performance of different kappa weighting estimators varies with features of the data-generating process; and that (ii) the normalized version of Tan (2006)'s estimator may be an attractive alternative in many contexts. Applied researchers with access to a binary instrumental variable should also consider covariate balancing or doubly robust estimators of the LATE.

Selecting the most suitable algorithm and determining its hyperparameters for a given optimization problem is a challenging task. Accurately predicting how well a certain algorithm could solve the problem is hence desirable. Recent studies in single-objective numerical optimization show that supervised machine learning methods can predict algorithm performance using landscape features extracted from the problem instances. Existing approaches typically treat the algorithms as black boxes, without consideration of their characteristics. To investigate whether a selection of landscape features that depends on the algorithms' properties could further improve regression accuracy, we consider the modular CMA-ES framework and estimate how much each landscape feature contributes to the best algorithm performance regression models. Exploratory data analysis performed on this data indicates that the set of most relevant features does not depend on the configuration of individual modules, but the influence that these features have on regression accuracy does. In addition, we show that by using classifiers that take into account the relevance of the features to model accuracy, we are able to predict the status of individual modules in the CMA-ES configurations.

Current work on personalized dialogue generation primarily helps the agent avoid contradicting its persona and makes the response more informative. However, we found that the responses generated by these models are mostly self-centered, with little care for the other party, since they ignore the user's persona. Moreover, we consider that high-quality transmission is essentially built on apprehending the persona of the other party. Motivated by this, we propose a novel personalized dialogue generator that detects the implicit user persona. Because it is difficult to collect a large number of personas for each user, we attempt to model the user's potential persona and its representation from the dialogue in the absence of any external information. A perception variable and a fader variable are conceived using Conditional Variational Inference. The two latent variables simulate the process of people being aware of the other party's persona and producing the corresponding expression in conversation. Finally, Posterior-discriminated Regularization is presented to enhance the training procedure. Empirical studies demonstrate that, compared with state-of-the-art methods, ours is more concerned with the user's persona and performs better in evaluations.

It is shown, with two sets of indicators that separately load on two distinct factors, independent of one another conditional on the past, that if it is the case that at least one of the factors causally affects the other, then, in many settings, the process will converge to a factor model in which a single factor will suffice to capture the covariance structure among the indicators. Factor analysis with one wave of data can then not distinguish between factor models with a single factor versus those with two factors that are causally related. Therefore, unless causal relations between factors can be ruled out a priori, alleged empirical evidence from one-wave factor analysis for a single factor still leaves open the possibilities of a single factor or of two factors that causally affect one another. The implications for interpreting the factor structure of psychological scales, such as self-report scales for anxiety and depression, or for happiness and purpose, are discussed. The results are further illustrated through simulations to gain insight into the practical implications of the results in more realistic settings prior to the convergence of the processes. Some further generalizations to an arbitrary number of underlying factors are noted.