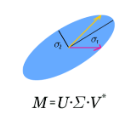

SVD (singular value decomposition) is one of the basic tools of machine learning, allowing one to optimize a basis for a given matrix. However, sometimes we have a set of matrices $\{A_k\}_k$ instead, and would like to optimize a single common basis for them: find orthogonal matrices $U$, $V$ such that the set of matrices $\{U^T A_k V\}_k$ is somehow simpler. For example, DCT-II is an orthonormal basis of functions commonly used in image/video compression; as discussed here, this kind of basis can be quickly and automatically optimized for a given dataset. Because the gradient descent optimization that is also discussed might be computationally costly, CSVD (common SVD) is proposed: a fast general approach based on SVD. Specifically, we choose $U$ as built of eigenvectors of $\sum_k (w_k)^q (A_k A_k^T)^p$ and $V$ as built of eigenvectors of $\sum_k (w_k)^q (A_k^T A_k)^p$, where $w_k$ are the weights of the matrices and $p,q>0$ are some chosen powers, e.g. 1/2, optionally with normalization, e.g. $A \to A - rc^T$ where $r_i=\sum_j A_{ij}$, $c_j =\sum_i A_{ij}$.
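The construction of $U$ and $V$ described above can be sketched in NumPy as follows. This is a minimal illustration, not the author's implementation: the function names `psd_power` and `csvd` are hypothetical, the optional normalization step is omitted, and the fractional matrix power is assumed to be taken through the eigendecomposition of the symmetric positive semidefinite matrices $A_k A_k^T$ and $A_k^T A_k$.

```python
import numpy as np

def psd_power(M, p):
    """Power p > 0 of a symmetric PSD matrix via its eigendecomposition."""
    vals, vecs = np.linalg.eigh(M)
    vals = np.clip(vals, 0.0, None)  # remove tiny negative round-off
    return (vecs * vals**p) @ vecs.T

def csvd(mats, weights=None, p=0.5, q=0.5):
    """Hypothetical helper: common bases U, V for a list of equally shaped matrices."""
    mats = [np.asarray(A, dtype=float) for A in mats]
    if weights is None:
        weights = np.ones(len(mats))  # assumption: equal weights by default
    weights = np.asarray(weights, dtype=float)
    left = sum(w**q * psd_power(A @ A.T, p) for w, A in zip(weights, mats))
    right = sum(w**q * psd_power(A.T @ A, p) for w, A in zip(weights, mats))
    # eigh sorts eigenvalues in ascending order; reverse to put dominant directions first
    _, U = np.linalg.eigh(left)
    _, V = np.linalg.eigh(right)
    return U[:, ::-1], V[:, ::-1]

# Toy check: matrices that share exact singular bases U0, V0
rng = np.random.default_rng(0)
U0, _ = np.linalg.qr(rng.standard_normal((4, 4)))
V0, _ = np.linalg.qr(rng.standard_normal((4, 4)))
s = np.array([4.0, 3.0, 2.0, 1.0])
mats = [U0 @ np.diag(c * s) @ V0.T for c in (1.0, 2.0, 0.5)]

U, V = csvd(mats)
off_diag = []
for A in mats:
    B = U.T @ A @ V
    off_diag.append(np.abs(B - np.diag(np.diag(B))).max())
print(max(off_diag))  # largest off-diagonal entry after the change of basis
```

In this toy case the matrices are simultaneously diagonalizable, so the transformed matrices $U^T A_k V$ should come out diagonal up to round-off; for general data one would only expect them to be "simpler" in the sense of the text.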

Related content

We study the scaling limits of stochastic gradient descent (SGD) with constant step-size in the high-dimensional regime. We prove limit theorems for the trajectories of summary statistics (i.e., finite-dimensional functions) of SGD as the dimension goes to infinity. Our approach allows one to choose the summary statistics that are tracked, the initialization, and the step-size. It yields both ballistic (ODE) and diffusive (SDE) limits, with the limit depending dramatically on the former choices. Interestingly, we find a critical scaling regime for the step-size below which the effective ballistic dynamics matches gradient flow for the population loss, but at which, a new correction term appears which changes the phase diagram. About the fixed points of this effective dynamics, the corresponding diffusive limits can be quite complex and even degenerate. We demonstrate our approach on popular examples including estimation for spiked matrix and tensor models and classification via two-layer networks for binary and XOR-type Gaussian mixture models. These examples exhibit surprising phenomena including multimodal timescales to convergence as well as convergence to sub-optimal solutions with probability bounded away from zero from random (e.g., Gaussian) initializations.

Functional linear and single-index models are core regression methods in functional data analysis and are widely used methods for performing regression when the covariates are observed random functions coupled with scalar responses in a wide range of applications. In the existing literature, however, the construction of associated estimators and the study of their theoretical properties is invariably carried out on a case-by-case basis for specific models under consideration. In this work, we provide a unified methodological and theoretical framework for estimating the index in functional linear and single-index models; in the latter case the proposed approach does not require the specification of the link function. In terms of methodology, we show that the reproducing kernel Hilbert space (RKHS) based functional linear least-squares estimator, when viewed through the lens of an infinite-dimensional Gaussian Stein's identity, also provides an estimator of the index of the single-index model. On the theoretical side, we characterize the convergence rates of the proposed estimators for both linear and single-index models. Our analysis has several key advantages: (i) we do not require restrictive commutativity assumptions for the covariance operator of the random covariates on one hand and the integral operator associated with the reproducing kernel on the other hand; and (ii) we also allow for the true index parameter to lie outside of the chosen RKHS, thereby allowing for index mis-specification as well as for quantifying the degree of such index mis-specification. Several existing results emerge as special cases of our analysis.

We consider the problem of estimating the autocorrelation operator of an autoregressive Hilbertian process. By means of a Tikhonov approach, we establish a general result that yields the convergence rate of the estimated autocorrelation operator as a function of the rate of convergence of the estimated lag zero and lag one autocovariance operators. The result is general in that it can accommodate any consistent estimators of the lagged autocovariances. Consequently it can be applied to processes under any mode of observation: complete, discrete, sparse, and/or with measurement errors. An appealing feature is that the result does not require delicate spectral decay assumptions on the autocovariances but instead rests on natural source conditions. The result is illustrated by application to important special cases.
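As a finite-dimensional illustration of the Tikhonov idea above, one can regularize the inversion of the lag-zero autocovariance when estimating the autoregression matrix of a vector AR(1) process. The sketch below assumes the plug-in form $\hat\rho_\alpha = \hat C_1 (\hat C_0 + \alpha I)^{-1}$ with empirical autocovariances; the function name `tikhonov_autocorrelation`, the value of `alpha`, and the simulated example are assumptions for illustration, not the paper's estimator or setting.

```python
import numpy as np

def tikhonov_autocorrelation(X, alpha=1e-3):
    """Hypothetical helper: rho_hat = C1 (C0 + alpha I)^{-1} from a (T, d) series."""
    X = np.asarray(X, dtype=float)
    X = X - X.mean(axis=0)
    X0, X1 = X[:-1], X[1:]
    n = X0.shape[0]
    C0 = X0.T @ X0 / n  # empirical lag-zero autocovariance
    C1 = X1.T @ X0 / n  # empirical lag-one autocovariance, E[X_{t+1} X_t^T]
    d = C0.shape[0]
    # Solve (C0 + alpha I) Z = C1^T, so that Z^T = C1 (C0 + alpha I)^{-1}
    return np.linalg.solve(C0 + alpha * np.eye(d), C1.T).T

# Simulate X_{t+1} = rho X_t + noise with a known, stable rho
rng = np.random.default_rng(0)
rho_true = np.array([[0.5, 0.1, 0.0],
                     [0.0, 0.3, 0.2],
                     [0.1, 0.0, 0.4]])
T = 20000
X = np.zeros((T, 3))
for t in range(T - 1):
    X[t + 1] = rho_true @ X[t] + rng.standard_normal(3)

rho_hat = tikhonov_autocorrelation(X, alpha=1e-3)
print(np.abs(rho_hat - rho_true).max())  # estimation error on this sample
```

In finite dimensions the regularization mainly guards against an ill-conditioned $\hat C_0$; in the Hilbertian setting of the abstract it is what makes the inversion well-posed at all.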

This paper analyzes a two-timescale stochastic algorithm framework for bilevel optimization. Bilevel optimization is a class of problems which exhibit a two-level structure, and its goal is to minimize an outer objective function with variables which are constrained to be the optimal solution to an (inner) optimization problem. We consider the case when the inner problem is unconstrained and strongly convex, while the outer problem is constrained and has a smooth objective function. We propose a two-timescale stochastic approximation (TTSA) algorithm for tackling such a bilevel problem. In the algorithm, a stochastic gradient update with a larger step size is used for the inner problem, while a projected stochastic gradient update with a smaller step size is used for the outer problem. We analyze the convergence rates for the TTSA algorithm under various settings: when the outer problem is strongly convex (resp. weakly convex), the TTSA algorithm finds an $\mathcal{O}(K^{-2/3})$-optimal (resp. $\mathcal{O}(K^{-2/5})$-stationary) solution, where $K$ is the total iteration number. As an application, we show that a two-timescale natural actor-critic proximal policy optimization algorithm can be viewed as a special case of our TTSA framework. Importantly, the natural actor-critic algorithm is shown to converge at a rate of $\mathcal{O}(K^{-1/4})$ in terms of the gap in expected discounted reward compared to a global optimal policy.
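The two-timescale structure described above (a larger step for the inner variable, a smaller projected step for the outer variable) can be sketched on a toy quadratic bilevel problem. Everything specific here is an assumption made for illustration: the inner objective $g(x,y)=\tfrac12\|y-Ax\|^2$, the outer objective $f(x,y)=\tfrac12\|y-b\|^2+\tfrac{\lambda}{2}\|x\|^2$, the ball constraint, the additive Gaussian gradient noise, and the step-size schedules are not taken from the paper, and `ttsa_toy` is a hypothetical name.

```python
import numpy as np

def project_ball(x, radius):
    """Euclidean projection onto the ball of the given radius."""
    n = np.linalg.norm(x)
    return x if n <= radius else x * (radius / n)

def ttsa_toy(A, b, lam=0.1, radius=10.0, iters=5000, noise=0.1, seed=0):
    """Two-timescale stochastic updates on a toy quadratic bilevel problem."""
    rng = np.random.default_rng(seed)
    m, d = A.shape
    x, y = np.zeros(d), np.zeros(m)
    for k in range(iters):
        beta = 1.0 / (k + 10) ** (2.0 / 3.0)  # larger inner step (assumed schedule)
        alpha = 1.0 / (k + 10)                # smaller outer step (assumed schedule)
        # Inner problem: noisy gradient of g in y; its minimizer is y*(x) = A x
        grad_y = (y - A @ x) + noise * rng.standard_normal(m)
        y = y - beta * grad_y
        # Outer problem: noisy hypergradient, with y standing in for y*(x).
        # For this toy g, the hypergradient is lam * x + A^T (y - b).
        hyper = lam * x + A.T @ (y - b) + noise * rng.standard_normal(d)
        x = project_ball(x - alpha * hyper, radius)
    return x, y

A = np.array([[1.0, 0.0, 0.0],
              [0.0, 1.0, 0.0],
              [0.0, 0.0, 1.0],
              [1.0, 1.0, 0.0],
              [0.0, 1.0, 1.0]])
b = np.array([1.0, 2.0, 3.0, 4.0, 5.0])
x_hat, y_hat = ttsa_toy(A, b)

# Closed-form minimizer of 0.5*||A x - b||^2 + 0.5*lam*||x||^2 (lies inside the ball here)
x_star = np.linalg.solve(A.T @ A + 0.1 * np.eye(3), A.T @ b)
print(np.linalg.norm(x_hat - x_star))
```

Because the inner variable moves on the faster timescale, `y` tracks $Ax$ closely enough that the outer update behaves like a noisy projected gradient step on the reduced objective.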

Due to the widespread use of complex machine learning models in real-world applications, it is becoming critical to explain model predictions. However, these models are typically black-box deep neural networks, explained post-hoc via methods with known faithfulness limitations. Generalized Additive Models (GAMs) are an inherently interpretable class of models that address this limitation by learning a non-linear shape function for each feature separately, followed by a linear model on top. However, these models are typically difficult to train, require numerous parameters, and are difficult to scale. We propose an entirely new subfamily of GAMs that utilizes basis decomposition of shape functions. A small number of basis functions are shared among all features, and are learned jointly for a given task, thus making our model scale much better to large-scale data with high-dimensional features, especially when features are sparse. We propose an architecture denoted as the Neural Basis Model (NBM) which uses a single neural network to learn these bases. On a variety of tabular and image datasets, we demonstrate that for interpretable machine learning, NBMs are the state-of-the-art in accuracy, model size, and throughput, and can easily model all higher-order feature interactions. Source code is available at //github.com/facebookresearch/nbm-spam.

We study the problem of high-dimensional sparse mean estimation in the presence of an $\epsilon$-fraction of adversarial outliers. Prior work obtained sample and computationally efficient algorithms for this task for identity-covariance subgaussian distributions. In this work, we develop the first efficient algorithms for robust sparse mean estimation without a priori knowledge of the covariance. For distributions on $\mathbb R^d$ with "certifiably bounded" $t$-th moments and sufficiently light tails, our algorithm achieves error of $O(\epsilon^{1-1/t})$ with sample complexity $m = (k\log(d))^{O(t)}/\epsilon^{2-2/t}$. For the special case of the Gaussian distribution, our algorithm achieves near-optimal error of $\tilde O(\epsilon)$ with sample complexity $m = O(k^4 \mathrm{polylog}(d))/\epsilon^2$. Our algorithms follow the Sum-of-Squares based proofs-to-algorithms approach. We complement our upper bounds with Statistical Query and low-degree polynomial testing lower bounds, providing evidence that the sample-time-error tradeoffs achieved by our algorithms are qualitatively the best possible.

In this paper, a higher-order finite difference scheme is proposed for Generalized Fractional Diffusion Equations (GFDEs). The fractional diffusion equation is considered in terms of the generalized fractional derivatives (GFDs), which use scale and weight functions in their definition. The GFD reduces to the Riemann-Liouville, Caputo, and other fractional derivatives in particular cases. Due to the importance of the scale and weight functions in describing the behaviour of real-life physical systems, we present the solutions of the GFDEs by considering various scale and weight functions. The convergence and stability of the finite difference scheme (FDS) are also analyzed to validate the proposed method. We consider test examples for numerical simulation of the FDS to justify the proposed numerical method.

Practical data assimilation algorithms often contain hyper-parameters, which may arise due to, for instance, the use of certain auxiliary techniques like covariance inflation and localization in an ensemble Kalman filter, the re-parameterization of certain quantities such as model and/or observation error covariance matrices, and so on. Given the richness of the established assimilation algorithms, and the abundance of the approaches through which hyper-parameters are introduced to the assimilation algorithms, one may ask whether it is possible to develop a sound and generic method to efficiently choose various types of (sometimes high-dimensional) hyper-parameters. This work aims to explore a feasible, although likely partial, answer to this question. Our main idea is built upon the notion that a data assimilation algorithm with hyper-parameters can be considered as a parametric mapping that links a set of quantities of interest (e.g., model state variables and/or parameters) to a corresponding set of predicted observations in the observation space. As such, the choice of hyper-parameters can be recast as a parameter estimation problem, in which our objective is to tune the hyper-parameters in such a way that the resulting predicted observations match the real observations to a good extent. From this perspective, we propose a hyper-parameter estimation workflow and investigate the performance of this workflow in an ensemble Kalman filter. In a series of experiments, we observe that the proposed workflow works efficiently even in the presence of a relatively large number (up to $10^3$) of hyper-parameters, and exhibits reasonably good and consistent performance under various conditions.

The two main algorithms for computing the numerical radius are the level-set method of Mengi and Overton and the cutting-plane method of Uhlig. Via new analyses, we explain why the cutting-plane approach is sometimes much faster or much slower than the level-set one and then propose a new hybrid algorithm that remains efficient in all cases. For matrices whose field of values is a circular disk centered at the origin, we show that the cost of Uhlig's method blows up with respect to the desired relative accuracy. More generally, we also analyze the local behavior of Uhlig's cutting procedure at outermost points in the field of values, showing that it often has a fast Q-linear rate of convergence and is Q-superlinear at corners. Finally, we identify and address inefficiencies in both the level-set and cutting-plane approaches and propose refined versions of these techniques.
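For orientation, the quantity being computed can be written as $r(A)=\max_{\theta\in[0,2\pi)}\lambda_{\max}\big((e^{i\theta}A+e^{-i\theta}A^*)/2\big)$. The sketch below evaluates this maximum on a uniform grid of angles. It is a brute-force baseline that only yields a lower bound, and it is neither the level-set method nor the cutting-plane method discussed above; the function name `numerical_radius_grid` and the grid size are assumptions.

```python
import numpy as np

def numerical_radius_grid(A, n_angles=720):
    """Lower bound on the numerical radius from a uniform grid of rotation angles."""
    A = np.asarray(A, dtype=complex)
    best = -np.inf
    for theta in np.linspace(0.0, 2.0 * np.pi, n_angles, endpoint=False):
        R = np.exp(1j * theta) * A
        H = (R + R.conj().T) / 2.0  # Hermitian part of the rotated matrix
        best = max(best, np.linalg.eigvalsh(H)[-1])  # largest eigenvalue
    return float(best)

# A 2x2 Jordan block: its field of values is a disk of radius 1/2 centered at the origin
J = np.array([[0.0, 1.0],
              [0.0, 0.0]])
r_jordan = numerical_radius_grid(J)

# A normal matrix: the numerical radius equals the spectral radius
D = np.diag([2.0, -1.0, 1.0j])
r_normal = numerical_radius_grid(D)
print(r_jordan, r_normal)
```

The Jordan block is an instance of the disk-shaped case mentioned in the abstract: every angle attains the maximum, so no single direction stands out as an outermost point.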

With the rapid increase of large-scale, real-world datasets, it becomes critical to address the problem of long-tailed data distribution (i.e., a few classes account for most of the data, while most classes are under-represented). Existing solutions typically adopt class re-balancing strategies such as re-sampling and re-weighting based on the number of observations for each class. In this work, we argue that as the number of samples increases, the additional benefit of a newly added data point will diminish. We introduce a novel theoretical framework to measure data overlap by associating with each sample a small neighboring region rather than a single point. The effective number of samples is defined as the volume of samples and can be calculated by a simple formula $(1-\beta^{n})/(1-\beta)$, where $n$ is the number of samples and $\beta \in [0,1)$ is a hyperparameter. We design a re-weighting scheme that uses the effective number of samples for each class to re-balance the loss, thereby yielding a class-balanced loss. Comprehensive experiments are conducted on artificially induced long-tailed CIFAR datasets and large-scale datasets including ImageNet and iNaturalist. Our results show that when trained with the proposed class-balanced loss, the network is able to achieve significant performance gains on long-tailed datasets.
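The effective-number formula $(1-\beta^{n})/(1-\beta)$ and the resulting per-class weights can be sketched as follows. The weights are taken as inversely proportional to the effective number of samples, as the abstract describes; rescaling them to sum to the number of classes, the default `beta`, and the function names are assumptions made for this illustration.

```python
import numpy as np

def effective_number(n, beta):
    """Effective number of samples (1 - beta^n) / (1 - beta), for beta in [0, 1)."""
    n = np.asarray(n, dtype=float)
    return (1.0 - np.power(beta, n)) / (1.0 - beta)

def class_balanced_weights(counts, beta=0.999):
    """Per-class loss weights inversely proportional to the effective number."""
    w = 1.0 / effective_number(counts, beta)
    return w * len(w) / w.sum()  # assumed normalization: weights sum to the class count

counts = np.array([5000, 500, 50, 5])  # a long-tailed toy class distribution
weights = class_balanced_weights(counts, beta=0.999)
print(weights)
```

With $\beta=0$ every class has effective number 1 and the weights are uniform; as $\beta \to 1$ the effective number approaches $n$ and the scheme approaches inverse-frequency re-weighting.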