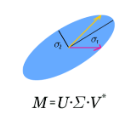

The randomized singular value decomposition (R-SVD) is a popular sketching-based algorithm for efficiently computing the partial SVD of a large matrix. When the matrix is low-rank, the R-SVD produces its partial SVD exactly; but when the rank is large, it only yields an approximation. Motivated by applications in data science and principal component analysis (PCA), we analyze the R-SVD under a low-rank signal plus noise measurement model; specifically, when its input is a spiked random matrix. The singular values produced by the R-SVD are shown to exhibit a BBP-like phase transition: when the SNR exceeds a certain detectability threshold that depends on the dimension reduction factor, the largest singular value is an outlier; below the threshold, no outlier emerges from the bulk of singular values. We further compute asymptotic formulas for the overlap between the ground truth signal singular vectors and the approximations produced by the R-SVD. Dimensionality reduction has the adverse effect of amplifying the noise in a highly nonlinear manner. Our results demonstrate the statistical advantage, in both signal detection and estimation, of the R-SVD over more naive sketched PCA variants; the advantage is especially dramatic when the sketching dimension is small. Our analysis is asymptotically exact, and substantially more fine-grained than existing operator-norm error bounds for the R-SVD, which largely fail to give meaningful error estimates in the moderate SNR regime. It applies to a broad family of sketching matrices previously considered in the literature, including Gaussian i.i.d. sketches, random projections, and the sub-sampled Hadamard transform, among others. Lastly, we derive an optimal singular value shrinker for singular values and vectors obtained through the R-SVD, which may be useful for applications in matrix denoising.
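To make the setting concrete, the following is a minimal sketch of a basic R-SVD with a Gaussian i.i.d. sketch, applied to a rank-one spiked matrix. It is an illustration under stated assumptions, not the paper's exact procedure: the function names `randomized_svd` and `spiked_matrix`, the absence of power iterations, and the $1/\sqrt{m}$ noise normalization are all choices made here for the example.

```python
import numpy as np


def randomized_svd(A, k, sketch_dim, rng):
    """Basic randomized SVD with a Gaussian sketch (assumed variant, no power iterations)."""
    n, m = A.shape
    omega = rng.standard_normal((m, sketch_dim))  # Gaussian i.i.d. sketching matrix
    Y = A @ omega                                 # sketch of the column space of A
    Q, _ = np.linalg.qr(Y)                        # orthonormal basis containing range(Y)
    B = Q.T @ A                                   # small (sketch_dim x m) projected matrix
    U_small, s, Vt = np.linalg.svd(B, full_matrices=False)
    U = Q @ U_small                               # lift left singular vectors back to R^n
    return U[:, :k], s[:k], Vt[:k]


def spiked_matrix(n, m, snr, rng):
    """Rank-one signal plus Gaussian noise; the noise scaling is an assumption of this sketch."""
    u = rng.standard_normal(n)
    u /= np.linalg.norm(u)
    v = rng.standard_normal(m)
    v /= np.linalg.norm(v)
    noise = rng.standard_normal((n, m)) / np.sqrt(m)
    return snr * np.outer(u, v) + noise, u, v


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    Y, u, v = spiked_matrix(n=600, m=400, snr=3.0, rng=rng)
    for sketch_dim in (20, 100, 400):
        U, s, Vt = randomized_svd(Y, k=1, sketch_dim=sketch_dim, rng=rng)
        # Overlap between the estimated and true left singular vector, in [0, 1].
        overlap = abs(U[:, 0] @ u)
        print(f"sketch_dim={sketch_dim:4d}  top singular value={s[0]:.3f}  overlap={overlap:.3f}")
```

Running the script for several sketching dimensions gives an informal view of how the top singular value and the overlap with the true singular vector change as the dimension reduction becomes more aggressive.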

Related content

We investigate trade-offs in static and dynamic evaluation of hierarchical queries with arbitrary free variables. In the static setting, the trade-off is between the time to partially compute the query result and the delay needed to enumerate its tuples. In the dynamic setting, we additionally consider the time needed to update the query result under single-tuple inserts or deletes to the database. Our approach observes the degree of values in the database and uses different computation and maintenance strategies for high-degree (heavy) and low-degree (light) values. For the latter it partially computes the result, while for the former it computes enough information to allow for on-the-fly enumeration. We define the preprocessing time, the update time, and the enumeration delay as functions of the light/heavy threshold. By appropriately choosing this threshold, our approach recovers a number of prior results when restricted to hierarchical queries. We show that for a restricted class of hierarchical queries, our approach achieves worst-case optimal update time and enumeration delay conditioned on the Online Matrix-Vector Multiplication Conjecture.

We show that the coefficients of the representing polynomial of any monotone Boolean function are the values of the Möbius function of an atomistic lattice related to this function. Using this we determine the representing polynomial of any Boolean function corresponding to an $st$-connectivity problem in acyclic quivers (directed acyclic multigraphs). Only monomials corresponding to unions of paths have non-zero coefficients, which are $(-1)^D$, where $D$ is an easily computable function of the quiver corresponding to the monomial (it is the number of plane regions in the case of planar graphs). We determine that the number of monomials with non-zero coefficients for the two-dimensional $n \times n$ grid connectivity problem is $2^{\Omega(n^2)}$.

We consider a non-linear Bayesian data assimilation model for the periodic two-dimensional Navier-Stokes equations with initial condition modelled by a Gaussian process prior. We show that if the system is updated with sufficiently many discrete noisy measurements of the velocity field, then the posterior distribution eventually concentrates near the ground truth solution of the time evolution equation, and in particular that the initial condition is recovered consistently by the posterior mean vector field. We further show that the convergence rate can in general not be faster than inverse logarithmic in sample size, but describe specific conditions on the initial conditions when faster rates are possible. In the proofs we provide an explicit quantitative estimate for backward uniqueness of solutions of the two-dimensional Navier-Stokes equations.

This paper investigates the utility gain of using Iterative Bayesian Update (IBU) for private discrete distribution estimation using data obfuscated with Locally Differentially Private (LDP) mechanisms. We compare the performance of IBU to Matrix Inversion (MI), a standard estimation technique, for seven LDP mechanisms designed for one-time data collection and for seven other LDP mechanisms designed for multiple data collections (e.g., RAPPOR). To broaden the scope of our study, we also varied the utility metric, the number of users $n$, the domain size $k$, and the privacy parameter $\epsilon$, using both synthetic and real-world data. Our results suggest that IBU can be a useful post-processing tool for improving the utility of LDP mechanisms in different scenarios without any additional privacy cost. For instance, our experiments show that IBU can provide better utility than MI, especially in high privacy regimes (i.e., when $\epsilon$ is small). Our paper provides insights for practitioners to use IBU in conjunction with existing LDP mechanisms for more accurate and privacy-preserving data analysis. Finally, we implemented IBU for all fourteen LDP mechanisms into the state-of-the-art multi-freq-ldpy Python package (//pypi.org/project/multi-freq-ldpy/) and open-sourced all our code used for the experiments as tutorials.
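As an illustration of the two estimators being compared, here is a minimal sketch of MI and IBU for a single LDP mechanism, generalized randomized response, which is chosen here only as an example. The function names are hypothetical and are not the API of multi-freq-ldpy; IBU is written as the standard expectation-maximization update for a known channel matrix.

```python
import numpy as np


def grr_channel(k, epsilon):
    """Channel matrix C[y, x] = P(report y | true value x) for generalized randomized response."""
    p = np.exp(epsilon) / (np.exp(epsilon) + k - 1)  # probability of reporting the true value
    q = 1.0 / (np.exp(epsilon) + k - 1)              # probability of each other value
    C = np.full((k, k), q)
    np.fill_diagonal(C, p)
    return C


def matrix_inversion(obs_freq, C):
    """MI estimate: solve C @ f = obs_freq. The result may leave the probability simplex."""
    return np.linalg.solve(C, obs_freq)


def iterative_bayesian_update(obs_freq, C, n_iter=1000, tol=1e-12):
    """IBU estimate: EM iterations starting from the uniform distribution."""
    k = C.shape[1]
    theta = np.full(k, 1.0 / k)
    for _ in range(n_iter):
        predicted = C @ theta                         # predicted distribution of reports
        new_theta = theta * (C.T @ (obs_freq / predicted))
        converged = np.abs(new_theta - theta).sum() < tol
        theta = new_theta
        if converged:
            break
    return theta


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    k, epsilon, n = 5, 0.5, 2000
    true_freq = np.array([0.6, 0.25, 0.1, 0.05, 0.0])
    C = grr_channel(k, epsilon)
    # Simulate n users: each report is drawn from the channel applied to the true distribution.
    reports = rng.choice(k, size=n, p=C @ true_freq)
    obs_freq = np.bincount(reports, minlength=k) / n
    print("MI :", np.round(matrix_inversion(obs_freq, C), 3))
    print("IBU:", np.round(iterative_bayesian_update(obs_freq, C), 3))
```

The structural difference visible in this sketch is that the MI estimate can contain negative entries when $\epsilon$ is small, whereas each IBU iterate stays non-negative and sums to one.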

This work addresses a version of the two-armed Bernoulli bandit problem where the sum of the means of the arms is one (the symmetric two-armed Bernoulli bandit). In a regime where the gap between these means goes to zero as the number of prediction periods approaches infinity, i.e., the difficulty of detecting the gap increases as the sample size increases, we obtain the leading order terms of the minmax optimal regret and pseudoregret for this problem by associating each of them with a solution of a linear heat equation. Our results improve upon the previously known results; specifically, we explicitly compute these leading order terms in three different scaling regimes for the gap. Additionally, we obtain new non-asymptotic bounds for any given time horizon. Although optimal player strategies are not known for more general bandit problems, there is significant interest in considering how regret accumulates under specific player strategies, even when they are not known to be optimal. We expect that the methods of this paper should be useful in settings of that type.

In this paper, we extend the Generalized Finite Difference Method (GFDM) to unknown compact submanifolds of the Euclidean domain, identified by randomly sampled data that (almost surely) lie in the interior of the manifolds. Theoretically, we formalize GFDM by exploiting a representation of smooth functions on the manifolds with Taylor expansions of polynomials defined on the tangent bundles. We illustrate the approach by approximating the Laplace-Beltrami operator, where a stable approximation is achieved by combining the Generalized Moving Least-Squares algorithm with a novel linear program that relaxes the diagonal-dominance constraint for the estimator to allow for a feasible solution even when higher-order polynomials are employed. We establish the theoretical convergence of GFDM in solving Poisson PDEs and numerically demonstrate the accuracy on simple smooth manifolds of low and moderately high co-dimensions as well as unknown 2D surfaces. For the Dirichlet Poisson problem where no data points on the boundaries are available, we employ GFDM with the volume-constraint approach that imposes the boundary conditions on data points close to the boundary. When the location of the boundary is unknown, we introduce a novel technique to detect points close to the boundary without needing to estimate the distance of the sampled data points to the boundary. We demonstrate the effectiveness of the volume-constraint approach when the boundary conditions are imposed on the data points detected by this new technique, compared to imposing the boundary conditions on all points within a certain distance from the boundary; the latter is sensitive to the choice of truncation distance and requires knowledge of the boundary location.

Granger causality is among the widely used data-driven approaches for causal analysis of time series data with applications in various areas including economics, molecular biology, and neuroscience. Two of the main challenges of this methodology are: 1) over-fitting as a result of limited data duration, and 2) correlated process noise as a confounding factor, both leading to errors in identifying the causal influences. Sparse estimation via the LASSO has successfully addressed these challenges for parameter estimation. However, the classical statistical tests for Granger causality resort to asymptotic analysis of ordinary least squares, which require long data duration to be useful and are not immune to confounding effects. In this work, we address this disconnect by introducing a LASSO-based statistic and studying its non-asymptotic properties under the assumption that the true models admit sparse autoregressive representations. We establish fundamental limits for reliable identification of Granger causal influences using the proposed LASSO-based statistic. We further characterize the false positive error probability and test power of a simple thresholding rule for identifying Granger causal effects and provide two methods to set the threshold in a data-driven fashion. We present simulation studies and application to real data to compare the performance of our proposed method to ordinary least squares and existing LASSO-based methods in detecting Granger causal influences, which corroborate our theoretical results.

In uncertainty quantification, variance-based global sensitivity analysis quantitatively determines the effect of each input random variable on the output by partitioning the total output variance into contributions from each input. However, computing conditional expectations can be prohibitively costly when working with expensive-to-evaluate models. Surrogate models can accelerate this, yet their accuracy depends on the quality and quantity of training data, which is expensive to generate (experimentally or computationally) for complex engineering systems. Thus, methods that work with limited data are desirable. We propose a diffeomorphic modulation under observable response preserving homotopy (D-MORPH) regression to train a polynomial dimensional decomposition surrogate of the output that minimizes the number of training data. The new method first computes a sparse Lasso solution and uses it to define the cost function. A subsequent D-MORPH regression minimizes the difference between the D-MORPH and Lasso solution. The resulting D-MORPH surrogate is more robust to input variations and more accurate with limited training data. We illustrate the accuracy and computational efficiency of the new surrogate for global sensitivity analysis using mathematical functions and an expensive-to-simulate model of char combustion. The new method is highly efficient, requiring only 15% of the training data compared to conventional regression.

We propose an improved convergence analysis technique that characterizes the distributed learning paradigm of federated learning (FL) with imperfect/noisy uplink and downlink communications. Such imperfect communication scenarios arise in the practical deployment of FL in emerging communication systems and protocols. The analysis developed in this paper demonstrates, for the first time, that there is an asymmetry in the detrimental effects of uplink and downlink communications in FL. In particular, the adverse effect of the downlink noise is more severe on the convergence of FL algorithms. Using this insight, we propose improved Signal-to-Noise Ratio (SNR) control strategies that, discarding the negligible higher-order terms, lead to a similar convergence rate for FL as in the case of a perfect, noise-free communication channel while consuming significantly less power than existing solutions. In particular, we establish that to maintain the $O(\frac{1}{\sqrt{K}})$ rate of convergence, as in the case of noise-free FL, we need to scale down the uplink and downlink noise by $\Omega({\sqrt{k}})$ and $\Omega({k})$ respectively, where $k$ denotes the communication round, $k=1,\dots, K$. Our theoretical result has two further major benefits: firstly, it does not rely on the somewhat unrealistic assumption of bounded client dissimilarity, and secondly, it only requires smooth non-convex loss functions, a function class better suited for modern machine learning and deep learning models. We also perform extensive empirical analysis to verify the validity of our theoretical findings.

Inverse reinforcement learning (IRL) algorithms often rely on (forward) reinforcement learning or planning over a given time horizon to compute an approximately optimal policy for a hypothesized reward function and then match this policy with expert demonstrations. The time horizon plays a critical role in determining both the accuracy of the reward estimate and the computational efficiency of IRL algorithms. Interestingly, an effective time horizon shorter than the ground-truth value often produces better results faster. This work formally analyzes this phenomenon and provides an explanation: the time horizon controls the complexity of an induced policy class and mitigates overfitting with limited data. This analysis leads to a principled choice of the effective horizon for IRL. It also prompts us to reexamine the classic IRL formulation: it is more natural to jointly learn the reward and the effective horizon than to learn the reward alone with a given horizon. Our experimental results confirm the theoretical analysis.