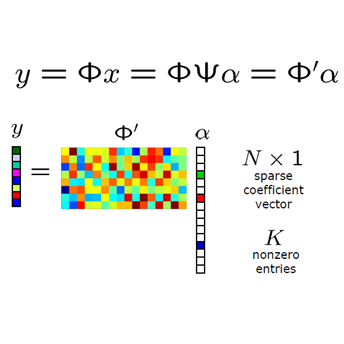

The recovery of signals that are sparse not in a basis but with respect to an over-complete dictionary is one of the most flexible settings in compressed sensing, with numerous applications. As in the standard compressed sensing setting, it is possible to reconstruct such signals efficiently from few linear measurements, for example by the so-called $\ell_1$-synthesis method. However, it is less well understood which measurement matrices provably work in this setting. Whereas in the standard setting it has been shown that even certain heavy-tailed measurement matrices can be used in the same sample complexity regime as Gaussian matrices, comparable results for the recovery of dictionary-sparse signals via $\ell_1$-synthesis are only available for the restrictive class of sub-Gaussian measurement vectors. In this work, we fill this gap and establish optimal guarantees for the recovery of vectors that are (approximately) sparse with respect to a dictionary via the $\ell_1$-synthesis method from linear, potentially noisy measurements, for a large class of random measurement matrices. In particular, we show that random measurements that fulfill only a small-ball assumption and a weak moment assumption, such as random vectors with i.i.d. Student-$t$ entries with a logarithmic number of degrees of freedom, lead to guarantees comparable to those for (sub-)Gaussian measurements. As a technical tool, we prove a bound on the expectation of the sum of squared order statistics under very general assumptions, which might be of independent interest. As a corollary of our results, we also obtain a slight improvement on the weakest known assumption on a measurement matrix with i.i.d. rows that suffices for uniform recovery in standard compressed sensing, improving on results by Lecué and Mendelson and by Dirksen, Lecué and Rauhut.
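
To make the $\ell_1$-synthesis idea concrete, the following minimal Python sketch (an illustration, not the authors' code) recovers $x = Dz$ from $y = Ax$. The method as usually stated solves the constrained program $\min_z \|z\|_1$ subject to $\|ADz - y\|_2 \le \eta$; for simplicity the sketch instead solves the penalized variant $\min_z \tfrac12\|ADz - y\|_2^2 + \lambda\|z\|_1$ with ISTA. The measurement matrix has i.i.d. Student-$t$ entries; the Gaussian dictionary, the dimensions, the degrees of freedom `nu = 3`, the penalty `lam` and the iteration count are all assumptions chosen for a small demo.

```python
import math
import random


def student_t(rng, nu):
    """One Student-t draw with nu degrees of freedom: N(0,1) / sqrt(chi2_nu / nu)."""
    chi2 = rng.gammavariate(nu / 2.0, 2.0)  # Gamma(nu/2, scale 2) is chi-squared(nu)
    return rng.gauss(0.0, 1.0) / math.sqrt(chi2 / nu)


def matmul(a, b):
    """Product of two matrices stored as lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]


def matvec(m, v):
    return [sum(mij * vj for mij, vj in zip(row, v)) for row in m]


def soft_threshold(x, tau):
    """Proximal map of tau * |x| (shrinks x toward zero by tau)."""
    return math.copysign(max(abs(x) - tau, 0.0), x)


def objective(m, z, y, lam):
    r = [p - q for p, q in zip(matvec(m, z), y)]
    return 0.5 * sum(ri * ri for ri in r) + lam * sum(abs(zi) for zi in z)


def l1_synthesis_ista(a, d, y, lam, iters=500):
    """ISTA for min_z 0.5*||A D z - y||^2 + lam*||z||_1; returns (D z, z)."""
    m = matmul(a, d)
    # The squared Frobenius norm upper-bounds ||A D||_2^2, so this step size is safe.
    step = 1.0 / sum(v * v for row in m for v in row)
    z = [0.0] * len(m[0])
    for _ in range(iters):
        r = [p - q for p, q in zip(matvec(m, z), y)]
        grad = [sum(m[i][j] * r[i] for i in range(len(m))) for j in range(len(z))]
        z = [soft_threshold(zj - step * gj, step * lam) for zj, gj in zip(z, grad)]
    return matvec(d, z), z


# Small demo: signal dimension n, dictionary with p > n atoms, k < n measurements.
rng = random.Random(0)
n, p, k, nu = 10, 15, 8, 3
D = [[rng.gauss(0.0, 1.0) for _ in range(p)] for _ in range(n)]
A = [[student_t(rng, nu) / math.sqrt(k) for _ in range(n)] for _ in range(k)]
z_true = [0.0] * p
z_true[2], z_true[9] = 1.5, -2.0  # 2-sparse coefficients in the dictionary
x_true = matvec(D, z_true)
y = matvec(A, x_true)
x_hat, z_hat = l1_synthesis_ista(A, D, y, lam=0.01)
print(max(abs(u - v) for u, v in zip(x_hat, x_true)))
```

Because the step size is at most the inverse Lipschitz constant, each ISTA iteration does not increase the penalized objective; how close `x_hat` gets to `x_true` depends on the random draw and the iteration budget.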

Related content

Cai and Hemachandra used iterative constant-setting to prove that Few $\subseteq$ $\oplus$P (and thus that FewP $\subseteq$ $\oplus$P). In this paper, we note that there is a tension between the nondeterministic ambiguity of the class one is seeking to capture and the density (or, more precisely, the needed "nongappy"-ness) of the easy-to-find "targets" used in iterative constant-setting. In particular, we show that even less restrictive gap-size upper bounds on the targets allow one to capture ambiguity-limited classes. Through a flexible, metatheorem-based approach, we do so for a wide range of classes, including the logarithmic-ambiguity version of Valiant's unambiguous nondeterminism class UP. Our work lowers the bar for what advances regarding the existence of infinite, P-printable sets of primes would suffice to show that restricted counting classes based on the primes have the power to accept superconstant-ambiguity analogues of UP. As an application of our work, we prove that the Lenstra-Pomerance-Wagstaff Conjecture implies that all $O(\log\log n)$-ambiguity NP sets are in the restricted counting class $\rm RC_{PRIMES}$.

The paper introduces structured machine learning regressions for heavy-tailed dependent panel data potentially sampled at different frequencies. We focus on sparse-group LASSO regularization, which can take advantage of mixed-frequency time series panel data structures and improve the quality of the estimates. We obtain oracle inequalities for the pooled and fixed-effects sparse-group LASSO panel data estimators, recognizing that financial and economic data can have fat tails. To that end, we leverage a new Fuk-Nagaev concentration inequality for panel data consisting of heavy-tailed $\tau$-mixing processes.
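
The sparse-group LASSO penalty combines an $\ell_1$ term with a sum of group-wise $\ell_2$ norms, $\lambda_1\|\beta\|_1 + \lambda_2\sum_g \|\beta_g\|_2$. Proximal-gradient solvers for it typically rely on the fact that, for non-overlapping groups, its proximal operator is elementwise soft-thresholding followed by group-wise shrinkage. The minimal Python sketch below shows only this step; the group structure (one could, for instance, group the lags of a single high-frequency covariate) and the penalty levels `lam1`, `lam2` are illustrative assumptions, and group-size weights are omitted.

```python
import math


def soft_threshold(v, tau):
    """Elementwise proximal map of tau * ||v||_1."""
    return [math.copysign(max(abs(x) - tau, 0.0), x) for x in v]


def sparse_group_lasso_prox(beta, groups, lam1, lam2, step=1.0):
    """Proximal map of step * (lam1 * ||b||_1 + lam2 * sum_g ||b_g||_2).

    groups: non-overlapping lists of indices that partition range(len(beta)).
    """
    out = soft_threshold(beta, step * lam1)  # within-group sparsity
    for idx in groups:
        norm = math.sqrt(sum(out[i] ** 2 for i in idx))
        # Group shrinkage: the whole group is zeroed when its norm is at most step*lam2.
        scale = max(0.0, 1.0 - step * lam2 / norm) if norm > 0.0 else 0.0
        for i in idx:
            out[i] *= scale
    return out


beta = [3.0, -0.5, 0.2, 0.1]
print(sparse_group_lasso_prox(beta, groups=[[0, 1], [2, 3]], lam1=0.5, lam2=1.0))
```

In this example the second group is removed entirely, while in the first group only the large coefficient survives, shrunk from 3.0 to 1.5.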

Existing long-tailed recognition methods, which aim to train class-balanced models from long-tailed data, generally assume that the models will be evaluated on a uniform test class distribution. However, practical test class distributions often violate this assumption (e.g., they may be long-tailed or even inversely long-tailed), which can cause existing methods to fail in real-world applications. In this work, we study a more practical task setting, called test-agnostic long-tailed recognition, where the training class distribution is long-tailed while the test class distribution is unknown and can be arbitrarily skewed. In addition to class imbalance, this task poses another challenge: the class distribution shift between the training and test samples is unknown. To handle this task, we propose a new method, called Test-time Aggregating Diverse Experts (TADE), with two strategies: (1) a new skill-diverse expert learning strategy that trains diverse experts, from a single long-tailed training distribution, to excel at handling different class distributions; (2) a novel test-time expert aggregation strategy that leverages self-supervision to aggregate multiple experts for handling various unknown test distributions. We theoretically show that our method has a provable ability to simulate the test class distribution. Extensive experiments verify that our method achieves new state-of-the-art performance on both vanilla and test-agnostic long-tailed recognition, with only three experts sufficing to handle arbitrarily varied test class distributions. Code is available at https://github.com/Vanint/TADE-AgnosticLT.
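
The test-time aggregation idea can be sketched as follows: the experts' class-probability vectors are combined with non-negative weights, and the weights are chosen to make the aggregated prediction stable across two augmented views of the same unlabeled test samples. In this minimal Python sketch, the cosine-similarity stability score, the coarse grid search over the weight simplex (used here in place of gradient-based training, for simplicity) and the toy logits are all assumptions for illustration, not the released TADE code.

```python
import itertools
import math


def softmax(logits):
    top = max(logits)
    exps = [math.exp(v - top) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def aggregate(expert_probs, weights):
    """Weighted combination of per-expert class-probability vectors."""
    return [sum(w * p[c] for w, p in zip(weights, expert_probs))
            for c in range(len(expert_probs[0]))]


def cosine(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))


def stability(weights, view1, view2):
    """Mean cosine similarity between aggregated predictions on two views of each sample."""
    sims = [cosine(aggregate(p1, weights), aggregate(p2, weights)) for p1, p2 in zip(view1, view2)]
    return sum(sims) / len(sims)


def simplex_grid(n_experts, resolution):
    """All weight vectors with entries in {0, 1/resolution, ..., 1} summing to 1."""
    for combo in itertools.product(range(resolution + 1), repeat=n_experts):
        if sum(combo) == resolution:
            yield [c / resolution for c in combo]


def select_weights(view1, view2, resolution=10):
    n_experts = len(view1[0])
    return max(simplex_grid(n_experts, resolution), key=lambda w: stability(w, view1, view2))


# Toy data: 2 test samples, 3 experts, 3 classes; view1[s][e] is expert e's prediction on sample s.
view1 = [[softmax([2.0, 0.5, 0.1]), softmax([0.2, 1.5, 0.3]), softmax([0.1, 0.2, 2.5])],
         [softmax([0.3, 2.2, 0.1]), softmax([1.8, 0.1, 0.4]), softmax([0.5, 0.4, 0.2])]]
view2 = [[softmax([1.9, 0.6, 0.1]), softmax([1.4, 0.2, 0.3]), softmax([0.3, 2.0, 0.1])],
         [softmax([0.2, 2.1, 0.2]), softmax([0.1, 0.3, 1.9]), softmax([0.4, 0.6, 0.1])]]
weights = select_weights(view1, view2)
print(weights, stability(weights, view1, view2))
```

Here the first expert predicts consistently on both views while the others do not, so the search tends to favour it; no labels are used at any point.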

We study stochastic convex optimization with heavy-tailed data under the constraint of differential privacy (DP). Most prior work on this problem is restricted to the case where the loss function is Lipschitz. Instead, as introduced by Wang, Xiao, Devadas, and Xu \cite{WangXDX20}, we study general convex loss functions with the assumption that the distribution of gradients has bounded $k$-th moments. We provide improved upper bounds on the excess population risk under concentrated DP for convex and strongly convex loss functions. Along the way, we derive new algorithms for private mean estimation of heavy-tailed distributions, under both pure and concentrated DP. Finally, we prove nearly-matching lower bounds for private stochastic convex optimization with strongly convex losses and mean estimation, showing new separations between pure and concentrated DP.
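
For intuition about private mean estimation under heavy tails, a common baseline (not the algorithm of this work) clips each sample and applies the Gaussian mechanism. Under replace-one neighbouring datasets, clipping to $[-C, C]$ bounds the sensitivity of the empirical mean by $\Delta = 2C/n$, and Gaussian noise with standard deviation $\sigma = \Delta/\sqrt{2\rho}$ then gives $\rho$-zCDP. The clipping level `clip` and the Pareto demo data in this minimal Python sketch are assumptions; with bounded $k$-th moments, the clipping level trades clipping bias against noise.

```python
import math
import random


def private_clipped_mean(xs, clip, rho, rng):
    """Clipped empirical mean plus Gaussian noise, satisfying rho-zCDP.

    Returns (estimate, sigma). Clipping to [-clip, clip] bounds the sensitivity
    of the mean by 2*clip/n under replace-one neighbours; Gaussian noise with
    sigma = sensitivity / sqrt(2*rho) then yields rho-zCDP.
    """
    n = len(xs)
    clipped = [min(max(x, -clip), clip) for x in xs]
    sensitivity = 2.0 * clip / n
    sigma = sensitivity / math.sqrt(2.0 * rho)
    return sum(clipped) / n + rng.gauss(0.0, sigma), sigma


rng = random.Random(42)
# Heavy-tailed demo data: Pareto(alpha=3) samples have mean alpha/(alpha-1) = 1.5.
data = [rng.paretovariate(3.0) for _ in range(1000)]
estimate, sigma = private_clipped_mean(data, clip=10.0, rho=0.5, rng=rng)
print(estimate, sigma)
```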

In this work, we consider algorithms for (nonlinear) regression problems with an $\ell_0$ penalty. Existing algorithms for $\ell_0$-based optimization problems are often run with a fixed step size, and selecting an appropriate step size depends on the restricted strong convexity and smoothness of the loss function, which are difficult to compute in practice. Inspired by the ideas of support detection and root finding \cite{HJK2020}, we propose a novel and efficient data-driven line search rule to adaptively determine an appropriate step size. We prove an $\ell_2$ error bound for the proposed algorithm without imposing strong restrictions on the cost functional. Extensive numerical comparisons with state-of-the-art algorithms on linear and logistic regression problems demonstrate the stability, effectiveness and superiority of the proposed algorithms.
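
A generic way to avoid a hand-tuned step size in $\ell_0$-constrained least squares is iterative hard thresholding (IHT) with backtracking: shrink the step until the new iterate satisfies a standard quadratic upper-bound (majorization) test, which guarantees, up to a small numerical tolerance, that the loss does not increase. The minimal Python sketch below uses this textbook rule, not the support-detection and root-finding line search proposed in this work; the sparsity level `s`, the initial step `eta0` and the toy data are assumptions.

```python
import random


def hard_threshold(v, s):
    """Keep the s largest-magnitude entries of v and zero the rest."""
    keep = sorted(range(len(v)), key=lambda i: abs(v[i]), reverse=True)[:s]
    out = [0.0] * len(v)
    for i in keep:
        out[i] = v[i]
    return out


def loss_and_grad(X, y, beta):
    """Least-squares loss 0.5*||X beta - y||^2 and its gradient X^T (X beta - y)."""
    r = [sum(xij * bj for xij, bj in zip(row, beta)) - yi for row, yi in zip(X, y)]
    grad = [sum(X[i][j] * r[i] for i in range(len(X))) for j in range(len(beta))]
    return 0.5 * sum(ri * ri for ri in r), grad


def iht_backtracking(X, y, s, iters=100, eta0=1.0, shrink=0.5):
    beta = [0.0] * len(X[0])
    for _ in range(iters):
        loss, grad = loss_and_grad(X, y, beta)
        eta = eta0
        while True:
            cand = hard_threshold([b - eta * g for b, g in zip(beta, grad)], s)
            diff = [c - b for c, b in zip(cand, beta)]
            # Quadratic model of the loss at cand; accepting only when the true loss
            # stays below it ensures the loss does not increase.
            model = loss + sum(g * d for g, d in zip(grad, diff)) + sum(d * d for d in diff) / (2.0 * eta)
            if loss_and_grad(X, y, cand)[0] <= model + 1e-12:
                break
            eta *= shrink
        beta = cand
    return beta


rng = random.Random(1)
X = [[rng.gauss(0.0, 1.0) for _ in range(6)] for _ in range(12)]
beta_true = [0.0, 2.0, 0.0, 0.0, -1.5, 0.0]
y = [sum(a * b for a, b in zip(row, beta_true)) for row in X]
beta_hat = iht_backtracking(X, y, s=2)
print([round(b, 3) for b in beta_hat])
```

The test always passes once `eta` drops below the inverse Lipschitz constant of the gradient, so the inner loop terminates without that constant ever being computed.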

In this short note, we provide a sample complexity lower bound for learning linear predictors with respect to the squared loss. Our focus is on an agnostic setting, where no assumptions are made on the data distribution. This contrasts with standard results in the literature, which either make distributional assumptions, refer to specific parameter settings, or use other performance measures.

We present a dynamic algorithm for maintaining the connected and 2-edge-connected components in an undirected graph subject to edge deletions. The algorithm is Monte-Carlo randomized and processes any sequence of edge deletions in $O(m + n \operatorname{polylog} n)$ total time. Interspersed with the deletions, it can answer, in constant time, queries asking whether two given vertices currently belong to the same (2-edge-)connected component. Our result is based on a general Monte-Carlo randomized reduction from decremental $c$-edge-connectivity to a variant of fully-dynamic $c$-edge-connectivity on a sparse graph. Although it is Monte-Carlo, our reduction supports a final self-check that can be used in Las Vegas algorithms for static problems such as Unique Perfect Matching. For non-sparse graphs with $\Omega(n \operatorname{polylog} n)$ edges, our connectivity and $2$-edge-connectivity algorithms handle all deletions in optimal linear total time, using existing algorithms for the respective fully-dynamic problems. This improves upon an $O(m \log (n^2 / m) + n \operatorname{polylog} n)$-time algorithm of Thorup [J. Alg. 1999], which runs in linear time only for graphs with $\Omega(n^2)$ edges. Our constant amortized cost for edge deletions in decremental connectivity in non-sparse graphs should be contrasted with an $\Omega(\log n/\log\log n)$ worst-case time lower bound in the decremental setting [Alstrup, Husfeldt, FOCS'98] and an $\Omega(\log n)$ amortized time lower bound in the fully-dynamic setting [Patrascu and Demaine, STOC'04].
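
To make the decremental problem concrete: if the whole deletion sequence were known in advance, a classic offline trick reverses it, turning deletions into insertions that a union-find structure handles in near-linear total time. The Python sketch below shows only this offline baseline for plain connectivity; it is not the online, Monte-Carlo reduction of this work and does not handle 2-edge-connectivity. The operation encoding is a hypothetical choice for illustration.

```python
def offline_decremental_connectivity(n, edges, ops):
    """Answer connectivity queries interleaved with edge deletions, offline.

    n: number of vertices 0..n-1; edges: initial undirected edges (no duplicates).
    ops: sequence of ("delete", u, v) or ("query", u, v); each edge is deleted at most once.
    Returns the query answers (True if u and v are connected) in forward order.
    """
    parent = list(range(n))
    size = [1] * n

    def find(x):
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # path halving
            x = parent[x]
        return x

    def union(a, b):
        ra, rb = find(a), find(b)
        if ra == rb:
            return
        if size[ra] < size[rb]:
            ra, rb = rb, ra
        parent[rb] = ra  # union by size
        size[ra] += size[rb]

    def key(u, v):
        return (min(u, v), max(u, v))

    deleted = {key(u, v) for op, u, v in ops if op == "delete"}
    for u, v in edges:  # start from the final graph, after all deletions
        if key(u, v) not in deleted:
            union(u, v)
    answers = []
    for op, u, v in reversed(ops):  # replay backwards: a deletion becomes an insertion
        if op == "query":
            answers.append(find(u) == find(v))
        else:
            union(u, v)
    answers.reverse()
    return answers


ops = [("query", 0, 3), ("delete", 1, 2), ("query", 0, 3),
       ("query", 0, 1), ("delete", 0, 1), ("query", 0, 1)]
print(offline_decremental_connectivity(4, [(0, 1), (1, 2), (2, 3)], ops))
# → [True, False, True, False]
```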

This paper compares custom ensemble models with models trained using existing libraries such as XGBoost and scikit-learn for predicting equipment failure in an oil-extraction equipment setup. The dataset used contains many missing values, and the paper proposes several model-based data imputation strategies to fill them in. The architecture and the training and testing procedures of the custom ensemble models are explained in detail.
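
As one example of a model-based imputation strategy (not necessarily one of those used in the paper), a missing sensor reading can be predicted from a correlated, fully observed reading with a least-squares line fitted on the complete rows. The column names `pressure` and `temperature` and the values in this minimal Python sketch are hypothetical.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit y = slope * x + intercept."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, my - slope * mx


def regression_impute(rows, target, predictor):
    """Fill None values in column `target` using a line fitted on column `predictor`."""
    complete = [r for r in rows if r[target] is not None and r[predictor] is not None]
    slope, intercept = fit_line([r[predictor] for r in complete], [r[target] for r in complete])
    imputed = []
    for row in rows:
        row = dict(row)  # copy so the input rows are left unchanged
        if row[target] is None and row[predictor] is not None:
            row[target] = slope * row[predictor] + intercept
        imputed.append(row)
    return imputed


rows = [{"pressure": 1.0, "temperature": 3.0},
        {"pressure": 2.0, "temperature": 5.0},
        {"pressure": 3.0, "temperature": 7.0},
        {"pressure": 4.0, "temperature": None}]
print(regression_impute(rows, target="temperature", predictor="pressure")[3])
# → {'pressure': 4.0, 'temperature': 9.0}
```

Richer variants replace the single-predictor line with a multivariate or tree-based model, but the pattern of fitting on complete rows and predicting the gaps stays the same.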

Alternating Direction Method of Multipliers (ADMM) is a widely used tool for machine learning in distributed settings, where a model is trained over distributed data sources through an interactive process of local computation and message passing. Such an iterative process can raise privacy concerns for data owners. The goal of this paper is to provide differential privacy for ADMM-based distributed machine learning. Prior approaches to differentially private ADMM exhibit low utility under strong privacy guarantees and often assume that the objective functions of the learning problems are smooth and strongly convex. To address these concerns, we propose a novel differentially private ADMM-based distributed learning algorithm called DP-ADMM, which combines an approximate augmented Lagrangian function with time-varying Gaussian noise addition in the iterative process to achieve higher utility for general objective functions under the same differential privacy guarantee. We also apply the moments accountant method to bound the end-to-end privacy loss. The theoretical analysis shows that DP-ADMM can be applied to a wider class of distributed learning problems, is provably convergent, and offers an explicit utility-privacy tradeoff. To our knowledge, this is the first paper to provide explicit convergence and utility properties for differentially private ADMM-based distributed learning algorithms. The evaluation results demonstrate that our approach achieves good convergence and model accuracy under strong end-to-end differential privacy guarantees.
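
The sketch below illustrates where noise enters an ADMM-based distributed learner: each agent solves its local subproblem, perturbs the result with Gaussian noise whose scale shrinks over iterations, and only then shares it for the consensus update. It uses consensus ADMM on toy quadratic losses $f_i(x) = \tfrac12 (x - a_i)^2$, whose local updates have a closed form. The exact augmented Lagrangian (rather than the approximate one in DP-ADMM) and the noise schedule `noise0 * decay**t` are assumptions, and the noise is not calibrated to any privacy budget.

```python
import random


def noisy_consensus_admm(targets, rho=1.0, iters=200, noise0=0.0, decay=0.99, seed=0):
    """Consensus ADMM for min_x sum_i 0.5 * (x - a_i)^2 over agents holding a_i.

    Local iterates are perturbed with N(0, sigma_t^2) noise, sigma_t = noise0 * decay**t
    (a hypothetical schedule), before the consensus (z) step sees them.
    """
    rng = random.Random(seed)
    n = len(targets)
    u = [0.0] * n  # scaled dual variables, one per agent
    z = 0.0  # consensus variable
    for t in range(iters):
        sigma = noise0 * decay ** t
        # Local step: argmin_x 0.5*(x - a)^2 + (rho/2)*(x - z + u)^2, then add noise.
        x = [(a + rho * (z - ui)) / (1.0 + rho) + rng.gauss(0.0, sigma)
             for a, ui in zip(targets, u)]
        z = sum(xi + ui for xi, ui in zip(x, u)) / n  # consensus step
        u = [ui + xi - z for xi, ui in zip(x, u)]  # dual ascent
    return z


targets = [1.0, 2.0, 3.0, 6.0]  # the noise-free optimum is their mean, 3.0
print(noisy_consensus_admm(targets), noisy_consensus_admm(targets, noise0=0.5))
```

Larger `noise0` or slower `decay` leaves the final consensus value further from the optimum, which is the utility side of the utility-privacy tradeoff the abstract refers to.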

In two-phase image segmentation, convex relaxation has allowed global minimisers to be computed for a variety of data fitting terms, and many efficient approaches exist to compute a solution quickly. However, we consider whether the nature of the data fitting in this formulation allows reasonable assumptions to be made about the solution that can further improve computational performance. In particular, we employ a well-known dual formulation of this problem and solve the corresponding equations in a restricted domain. We present experimental results that explore the dependence of the solution on this restriction and quantify improvements in computational performance. This approach extends readily to analogous methods and could provide an efficient alternative for problems of this type.
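
One plausible way to pick a restricted domain, assuming a Chan-Vese-type fitting term $f = (I - c_1)^2 - (I - c_2)^2$ with fixed phase intensities $c_1, c_2$, is to solve only where the data are ambiguous ($|f|$ below a threshold) and to fix the label elsewhere to the phase the data strongly favour. The criterion and the threshold in this minimal Python sketch are hypothetical rather than the authors' rule, and since it ignores the regularisation term, the threshold would need to be chosen conservatively.

```python
def fitting_term(image, c1, c2):
    """Pointwise two-phase fitting term; negative values favour phase 1 (intensity c1)."""
    return [[(v - c1) ** 2 - (v - c2) ** 2 for v in row] for row in image]


def restricted_domain(image, c1, c2, threshold):
    """Return (mask, labels): mask marks pixels still to be solved for;
    labels holds 1 (phase 1), 0 (phase 2) or None (undecided, inside the mask)."""
    f = fitting_term(image, c1, c2)
    mask = [[abs(v) < threshold for v in row] for row in f]
    labels = [[None if inside else (1 if v < 0 else 0) for inside, v in zip(mrow, frow)]
              for mrow, frow in zip(mask, f)]
    return mask, labels


image = [[0.0, 0.5, 1.0],
         [0.1, 0.45, 0.9]]
mask, labels = restricted_domain(image, c1=1.0, c2=0.0, threshold=0.5)
print(mask)    # → [[False, True, False], [False, True, False]]
print(labels)  # → [[0, None, 1], [0, None, 1]]
```

Only the pixels inside `mask` would then enter the dual iterations, which is where the computational saving would come from.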